You’ve undoubtedly used ChatGPT at some point, whether to get simpler explanations of complex issues, draft a quick email, or run a quick brainstorming session.

But did you know that GPT (Generative Pre-trained Transformer) is the brain behind ChatGPT? It’s the technology that powers this revolutionary tool.

Given its remarkable capabilities, you might consider developing custom GPT solutions tailored to meet your unique business needs, whether it’s automating internal reporting, aiding market research, or generating highly tailored customer responses.

Or maybe you want to make a commercial solution like an AI-powered customer service bot or a content creation platform for other enterprises. The big question is: Is it really possible to build a custom GPT model tailored to your requirements?

The answer is a resounding Yes! This blog guides you through how to create a bespoke GPT model for your business use cases. To ensure your model meets your goals, we’ll discuss the process, essential factors, and best practices.

No matter whether you’re building in-house solutions or launching a commercial one, you’ll have all the insights needed to get started.

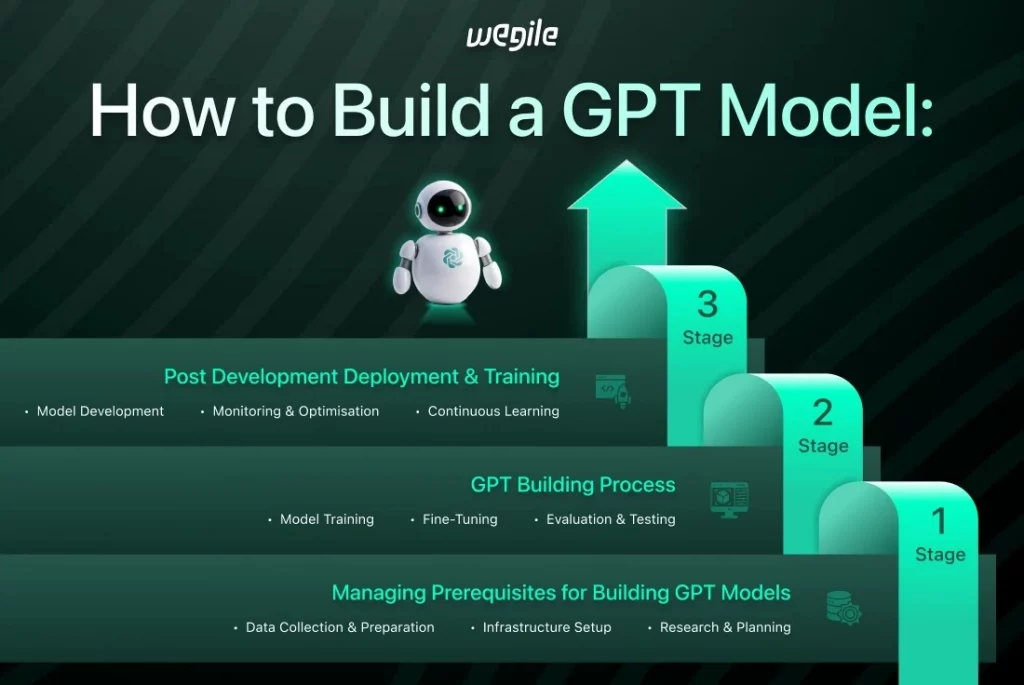

How to Build a GPT Model: 3 Stages

Building a GPT model is a structured process that consists of three main stages:

Stage 1: Managing Prerequisites for Building GPT Models

Stage 2: GPT Building Process

Stage 3: Post-Development - Deployment & Training

In the later sections, we will look deeply into these stages. Before that, let’s understand what GPT is.

What is GPT?

Generative Pretrained Transformer (GPT) is an artificial intelligence language model designed to understand and generate human-like text based on input data. Let’s break down each element of “GPT.”

G: Generative

“Generative” is the model’s ability to create new content. A generative model like GPT can produce text, images, or other data depending on what it learned during training, unlike typical AI models that classify images or forecast values. GPT specifically excels at generating human-like text.

For example, GPT can generate a full story from a prompt like “Write a story about a dragon.” This demonstrates its generative ability.

P: Pretrained

“Pretrained” indicates that the model has been trained on a huge dataset before being employed for specific tasks. This means that GPT has learned patterns, structures, syntax, and some general world knowledge from a vast corpus of text data (books, webpages, and other publicly available text).

Pretraining teaches the model to anticipate the next word in a sequence through unsupervised tasks. Pretrained GPT can be fine-tuned on individual datasets or tasks to improve its performance in applications like sentiment analysis and question answering.

T: Transformer

“Transformer” is the deep learning architecture that runs GPT. This neural network is ideal for language and other sequence-based tasks. Transformers process input data in parallel and employ self-attention mechanisms to understand associations between different words in a sentence, irrespective of position.

The self-attention mechanism allows the model to focus on the most relevant aspects of the input. This makes transformers ideal for handling difficult language tasks. Transformers can efficiently interpret large text sequences without sequential processing, unlike previous neural network models.

Thus, GPT has changed how humans interact with AI systems by performing tasks like generating text, summarising, translating, and generating code. Keep reading as we now explore its origins, main characteristics, and how it works.

Origins of GPT

GPT was introduced by OpenAI, a research organisation invested in building safe and powerful AI tech. OpenAI, whose co-founders include Sam Altman and Elon Musk, released GPT-1 in 2018.

GPT was designed to generate human-like text utilising massive amounts of data and a model that could generalise (the model’s capacity to perform well on new data that it was not trained on) across a range of linguistic tasks.

OpenAI trained the original GPT model on a large corpus of unlabelled text without task-specific training, making it capable of performing various language tasks with minimal fine-tuning.

With subsequent iterations such as GPT-2, GPT-3, and now GPT-4, OpenAI has pushed beyond the boundaries of natural language understanding and generation, changing the landscape of AI research and development.

How GPT Works

GPT uses the transformer architecture and neural networks to process and generate text, giving it a robust and efficient foundation. GPT relies on transformers for tasks like translating languages, summarisation, and text generation.

The model identifies relationships in extended text sequences using transformer layers that analyse input data in parallel. Transformers are effective because of the self-attention mechanism, which allows the model to weigh the importance of each word in a sentence regardless of its position.

For example, in the sentence “The dog chased the ball,” GPT doesn’t simply focus on words that are close together (e.g., “dog” and “chased”) but also comprehends the link between “dog” and “ball” across the sentence. During training, GPT absorbs information from large text datasets, acquiring knowledge of facts, reasoning, cultural nuances, and grammar.

Once trained properly, it can summarise articles, answer queries, and anticipate the most likely word sequences from the input prompt. Essentially, GPT works by predicting the next word in a sequence and utilising its knowledge of language patterns to create meaningful, contextually appropriate content.
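To make next-word prediction concrete, here is a minimal sketch that uses a bigram counter rather than a transformer. It is an illustration only: the corpus and the function names (`train_bigram`, `next_word_probs`, `generate`) are made up for this example, and a real GPT conditions on the whole preceding context, not just the previous word.

```python
from collections import Counter, defaultdict

def train_bigram(corpus):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts

def next_word_probs(counts, word):
    """Turn raw follow-up counts into a probability distribution."""
    followers = counts[word]
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()} if total else {}

def generate(counts, start, max_words=5):
    """Greedy decoding: repeatedly append the most probable next word."""
    words = [start]
    for _ in range(max_words):
        probs = next_word_probs(counts, words[-1])
        if not probs:  # no known continuation
            break
        words.append(max(probs, key=probs.get))
    return " ".join(words)

# Tiny made-up corpus, for illustration only.
corpus = [
    "the dog chased the ball",
    "the dog chased the cat",
    "the dog caught the ball",
]
model = train_bigram(corpus)
print(next_word_probs(model, "dog"))
print(generate(model, "dog", max_words=3))
```

Because this toy model only looks one word back, it quickly loops back onto itself. Self-attention over the full context is what lets GPT avoid that kind of short-sighted output.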

GPT Models Till Now

Over time, GPT has grown from a simple language model to a powerful AI powerhouse. This timeline highlights GPT’s journey from its first release to its newest innovations.

- GPT-1 (2018): The first iteration of GPT served as a proof of concept. It showcased the possibilities of transformer-based models. It had 117 million parameters and showed that unsupervised learning on massive data sets could result in a model capable of completing tasks such as generating text and predicting sentences with little fine-tuning.

- GPT-2 (2019): GPT-2 made a huge leap with 1.5 billion parameters. The fact that GPT-2 could generate cohesive, contextually appropriate content across paragraphs changed everything. It could produce essays and even stories with ease. OpenAI initially delayed its full release owing to concerns about misuse.

- GPT-3 (2020): GPT-3 stunned the AI world with its outstanding 175 billion parameters. It can translate languages, summarise text, construct extensive, context-aware text, and answer complex questions. This version raised the bar for natural language production, with APIs that enabled developers to integrate GPT-3 into a variety of applications.

- GPT-4 (2023): GPT-4 introduced several significant enhancements, including increased accuracy, stronger reasoning abilities, and the capacity to process multimodal inputs (such as text and images). GPT-4 is better at nuanced understanding, making it well suited to complicated language comprehension and logical reasoning tasks.

Benefits and Limitations of Different Models

Each GPT iteration improved text generation and interpretation, but each still has limitations.

- GPT-1: Introduced the concept of unsupervised learning with transformers, but it struggled with coherence over long passages.

- GPT-2: Major improvements in coherence and creativity, but it still had limitations in handling ambiguity and required fine-tuning for specific tasks.

- GPT-3: GPT-3’s 175 billion parameters allowed it to produce high-quality responses, but it struggled with factual mistakes, nuanced understanding, and consistency in longer writings.

- GPT-4: It has gotten better at reasoning, understanding, and multimodal inputs, but it still has a hard time with biases in training data and unusual edge cases, and it requires a lot of computing power.

GPT vs. Other Models

When it comes to generative AI, GPT isn’t the only player in the game. Models like BERT (Bidirectional Encoder Representations from Transformers) and T5 (Text-to-Text Transfer Transformer) also use transformer-based architecture but focus on different objectives.

- GPT (Generative Pretrained Transformer) excels in text generation, using unsupervised learning to predict the next word in a sequence. This makes it particularly strong in tasks like story generation, code completion, and conversational AI.

- BERT is more task-oriented and designed for tasks like question answering and sentiment analysis. Unlike GPT, which reads text from left to right, BERT processes text in both directions (bidirectionally), making it excellent at understanding the context of a word in a sentence. However, BERT isn’t as good at generating text as GPT.

- T5 is similar to GPT in that it can be fine-tuned for a wide variety of tasks, but it treats every task as a text-to-text problem. For instance, T5 can take a question and generate an answer, but it’s designed to work across many different language tasks (e.g., summarisation, translation).

What sets GPT apart is its flexibility and scalability. While models like BERT or T5 excel in specific areas, GPT is not limited to a specific task and can be used for anything from casual conversations to specialised applications.

Benefits of GPT

- Versatility Across Tasks: GPT excels in many NLP tasks even without specific task-driven training. It can generate content, summarise, translate, and answer queries, making it a one-stop solution for automating language-based activities.

- Efficiency in Content Generation: Businesses can easily create marketing, customer support, and social media content. GPT speeds up content generation and minimises writing and editing while retaining quality. This is especially important in businesses such as advertising, publishing, and digital media.

- Cost and Resource Efficiency: Its capacity to be fine-tuned using smaller, task-relevant datasets enables organisations to skip large-scale training. Pre-trained models can be adapted, saving deployment time and conserving processing resources. This allows smaller enterprises to access advanced AI capabilities without incurring substantial infrastructure expenses.

- Real-Time Interactions and Scalability: In customer service applications, GPT allows firms to scale without compromising response time or quality. GPT’s scalability makes it excellent for high-demand scenarios like managing multiple client queries or conversations.

- Improvement Over Time: GPT models can constantly improve with fine-tuning and retraining. As businesses gather more data, they can enhance accuracy and relevance by tailoring the model to their specific industry needs. This adaptability keeps the model up-to-date with changing trends and user expectations.

Use Cases of GPT

- Customer Support Automation: GPT-powered chatbots enhance customer support by providing instant, accurate, context-aware responses. GPT handles numerous client requests without human intervention in communication and e-commerce, enhancing efficiency and minimising the need for large customer care personnel.

- Content Creation for Marketing: Marketers use GPT to create blog posts, newsletters, and social media content. GPT automates writing so content teams can concentrate on strategy and creativity, improving productivity while preserving quality. Automation benefits companies such as content agencies and e-commerce enterprises in terms of both speed and scale. Businesses often partner with eCommerce app development companies to leverage the advantages of AI for their growth.

- Text Summarisation for Legal & Medical Industries: Professionals in law and medicine often need to summarise intricate documents. GPT swiftly extracts the key points from lengthy legal contracts, medical research articles, and regulatory documents. This allows legal teams and healthcare experts to save time and make more informed decisions without having to read every document in full.

- Market Analysis & Sentiment Analysis: GPT can use vast amounts of customer feedback, social media posts, and reviews to evaluate sentiment and market trends. Companies rely on GPT for brand monitoring, which enables them to modify marketing plans, track customer sentiment, and discover emerging concerns in real-time.

- Multilingual Translation and Localisation: Translating text across languages with contextual integrity is common with GPT. Global businesses use GPT to localise their websites, goods, and communications in several languages, ensuring that local audiences understand the translated version of text while preserving its original intent.

Technologies and Frameworks for Building GPT Models

| Tool/Framework | Description |
| --- | --- |
| Python | Primary programming language for AI development, used for model building. |
| PyTorch | A deep learning framework commonly used to implement GPT models. |
| TensorFlow | Another popular deep learning library, often used for large-scale GPT models. |
| Hugging Face Transformers | A library with pre-trained models and easy interfaces for fine-tuning GPT. |
| OpenAI API | Provides access to GPT models through an easy-to-use interface for development. |
| Google Colab | A cloud-based Jupyter notebook environment ideal for experimenting with models. |
| Docker | Used for containerization, ensuring consistent environments across systems. |
| Kubernetes | A system for automating the deployment, scaling, and management of containerised GPT models. |
| MLflow | A platform for managing the machine learning lifecycle and tracking experiments. |

The Process of Building GPT Models

Each stage requires thoughtful planning, resource allocation, and technical expertise to ensure success. In this section, we will explore the first step, which is setting the right foundation before diving into model construction and deployment.

Stage 1: Managing Prerequisites for Building GPT Models

Make sure you’re well-prepared before you consider diving into model development. This stage involves acquiring resources, comprehending basic technologies, and establishing infrastructure.

A thorough understanding of fundamental topics such as deep learning, LLMs, neural networks, NLP, and generative AI is essential. The model you create will be highly influenced by the data you have available and the tools at your disposal.

These technical considerations are tricky to deal with. However, partnering with the right AI app development company can simplify the process. A skilled team can help you navigate these technical issues, letting you focus on your GPT model’s strategy without learning AI development.

This strategy is especially effective if you lack in-house knowledge or require professional assistance in developing and deploying your model.

Tools to Have Handy for Building a GPT Model

| Tools | Details |
| --- | --- |
| Programming Language | Python is the go-to language for AI development. It’s versatile, widely used, and has extensive support for AI libraries. |
| Libraries/Frameworks | PyTorch and TensorFlow are essential for deep learning. The Hugging Face Transformers library is a must-have for NLP tasks. |
| Datasets | You’ll need large, diverse datasets such as OpenWebText or Wikipedia. If possible, curate custom datasets to match your task. |
| Hardware | GPUs (like Nvidia V100 or A100) and TPUs are necessary to handle the immense computational load during model training. |
| IDEs/Notebooks | Jupyter and Google Colab are ideal for experimentation, as they allow for real-time code execution and visualisation. |
| Version Control Tools | Git is ideal for managing code and collaborating with team members. |
| Cloud/On-prem Resources | Decide whether you’ll rely on cloud services (AWS, Google Cloud, Azure) or on-premise hardware for your computational needs. |

Factors to Consider When Choosing the Right GPT Model

Even more important than deciding what kind of GPT to construct is picking the right model architecture. Understanding the factors that affect project performance can help you avoid problems.

- Data Availability: The foundation of every GPT model is reliable data. Model training requires massive textual data. This data must be rich, diversified, and bias-free to ensure model fairness. Use OpenWebText (a publicly available recreation of the WebText corpus) or create custom datasets to customise the model. Data selection should always prioritise ethical considerations, such as removing bias and misrepresentation.

- Computational Resources: GPT models are computationally intensive; thus, knowing your computational requirements beforehand saves time and money. Consider GPUs or TPUs according to your budget. GPUs (like the Nvidia V100 or A100) and TPUs can speed up training but come at a cost. If your budget is tight, AWS or Google Cloud can be a cost-effective alternative to on-premises infrastructure. Training time is also an important consideration; make sure to schedule your resources adequately to avoid delays.

- Desired Use Case: GPT models vary; thus, identifying your goals will guide your progress. Designing a GPT that works effectively requires knowing the task, whether you’re building a model for text production, summarisation, or question answering. Text generation may benefit from a larger dataset with different writing styles, whereas summarisation can benefit from a more concentrated, high-quality dataset.

- Ethical Considerations: AI models are strong, but they also have ethical implications. Without proper training and filtering, your GPT model could provide biased content. Use ethically sourced data to discover and minimise model prediction biases. Data privacy and fairness should be major considerations during your training procedures.

- Pretrained Model Availability: Training a GPT model from scratch takes time and resources. Based on your use case, you can opt to use pre-trained models like GPT-2 (whose weights are openly available) or GPT-3 (accessed through the OpenAI API), which can be fine-tuned for your specific task. This saves time and computational resources and often yields excellent results. However, developing from scratch gives you more control over the model’s design and can lead to higher performance for dedicated tasks.

Setting Up the Environment

Now that you have all the tools and resources, it’s time to set up your development environment. This requires installing libraries, maintaining dependencies, and setting up version control systems.

- Installing Libraries: Install PyTorch, TensorFlow, and Hugging Face Transformers. Conda or virtual environments are strongly advised for managing dependencies without leading to version conflicts.

- Version Control: Git is the most popular version control system. Use Git or GitHub for your project to streamline collaboration and code version management. You can also utilise systems like GitLab to host and review code.

Once your environment is ready, you can confidently start building and fine-tuning your GPT model, knowing that you have the essential tools and infrastructure in place.

Stage 2: The GPT Building Process

Creating a GPT model is tough and involves multiple steps. After laying the groundwork, the process of building the model begins. This step includes data preprocessing, model architecture selection, training, and performance evaluation.

Preprocessing Data for GPT Models

Preprocessing is an important step in creating GPT models. Data is collected, cleaned, and tokenised to make it suitable for your model to learn from.

Data Collection

You need a large, high-quality dataset for your model to work. Public datasets like OpenWebText, Wikipedia, and news articles or, if available, proprietary data specific to your domain, can be leveraged, depending on your use case.

The model can also be customised using internal data from your systems (customer interactions, product descriptions, etc.). To ensure comprehensive model coverage, acquire a diverse set of data that reflects different language patterns, genres, and styles.

Data Cleaning

After collecting data, cleansing it is crucial. Remove irrelevant material, rectify typos, and filter out noisy data, including broken sentences, out-of-context words, and duplicate content.

Erroneous text, such as truncated sentences, garbled characters, or leftover markup, should be omitted. Since poor data affects model performance, only relevant and high-quality content should be kept.
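The cleaning steps above can be sketched as a small filter. This is a minimal illustration, assuming line-based text input; the function name `clean_corpus` and the `min_words` threshold are hypothetical choices, and a production pipeline would add language detection, near-duplicate detection, and source filtering.

```python
import re

def clean_corpus(lines, min_words=4):
    """Normalise whitespace, drop short fragments and exact duplicates."""
    seen = set()
    cleaned = []
    for line in lines:
        text = re.sub(r"<[^>]+>", " ", line)      # strip leftover HTML tags
        text = re.sub(r"\s+", " ", text).strip()  # collapse runs of whitespace
        if len(text.split()) < min_words:          # too short to be a useful sentence
            continue
        key = text.lower()
        if key in seen:                            # duplicate (case-insensitive)
            continue
        seen.add(key)
        cleaned.append(text)
    return cleaned

# Made-up sample input for illustration.
raw = [
    "GPT models   learn from large text corpora.",
    "<p>GPT models learn from large text corpora.</p>",
    "click here",
    "Tokenisation splits text into subword units.",
]
print(clean_corpus(raw))
```

The second line is dropped as a duplicate once its markup is removed, and the third is dropped as a fragment.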

Tokenization & Feature Selection

After cleaning the data, the next step is to prepare it for the model by tokenising the text into manageable units. Common tokenisation techniques used in NLP tasks include Byte Pair Encoding (BPE) and SentencePiece.

These approaches break words into subwords, enabling the model to effectively handle rare or previously unseen words. To make sure the data matches the model design, choose features like text length, token frequency, and syntactic structures.
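As a rough illustration of how Byte Pair Encoding learns subword units, the sketch below repeatedly merges the most frequent adjacent symbol pair in a toy word-frequency table. The word counts and function names are made up for this example, and real tokenisers (including GPT-2's byte-level BPE) add details such as byte fallback and word-boundary handling that are omitted here.

```python
from collections import Counter

def get_pair_counts(vocab):
    """Count adjacent symbol pairs across all words, weighted by word frequency."""
    pairs = Counter()
    for symbols, freq in vocab.items():
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    return pairs

def merge_pair(pair, vocab):
    """Replace every occurrence of `pair` with a single merged symbol."""
    merged = {}
    for symbols, freq in vocab.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + freq
    return merged

def learn_bpe(word_freqs, num_merges):
    """Learn up to `num_merges` merge rules from a word-frequency table."""
    vocab = {tuple(word): freq for word, freq in word_freqs.items()}
    merges = []
    for _ in range(num_merges):
        pairs = get_pair_counts(vocab)
        if not pairs:
            break
        best = max(pairs, key=pairs.get)  # most frequent pair (first one wins ties)
        vocab = merge_pair(best, vocab)
        merges.append(best)
    return merges, vocab

# Hypothetical word frequencies, for illustration only.
word_freqs = {"low": 5, "lower": 2, "newest": 6, "widest": 3}
merges, vocab = learn_bpe(word_freqs, num_merges=3)
print(merges)
print(list(vocab))
```

After a few merges the shared suffix "est" becomes a single token, which is how subword tokenisation lets a model handle a rare word by composing it from familiar pieces.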

Handling Noisy Data

Managing noisy data is a constant challenge during preprocessing. Noise in technical datasets can include irrelevant content, outliers, unexplained jargon, and incorrect language.

Filtering text from unreliable sources and utilising automated tools to detect and delete data anomalies are among the practical solutions.

Building Your GPT from Scratch

Model Design

Setting up the framework for your GPT model is a critical step. Key factors such as the number of transformer layers, attention heads, and embedding size play a significant role in shaping the model’s performance.

The number of layers and attention heads allows the model to capture complex patterns, but they also add to the computational load. You must balance model complexity and resource constraints based on dataset size and computing budget.
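To get a feel for this trade-off, a back-of-the-envelope estimate can help. The sketch below uses the common rule of thumb of about 12 × d_model² weights per transformer block and assumes a 4x-wide feed-forward layer, shared input and output embeddings, and FP32 training with the Adam optimiser. The function names are hypothetical, and the result is an approximation, not an exact count.

```python
def gpt_param_count(n_layers, d_model, vocab_size, context_len):
    """Approximate parameter count for a GPT-style decoder.

    Each transformer block has roughly 12 * d_model^2 weights
    (4 * d_model^2 in attention, 8 * d_model^2 in the 4x-wide MLP).
    Biases and layer-norm weights are ignored.
    """
    blocks = 12 * n_layers * d_model ** 2
    token_embeddings = vocab_size * d_model
    position_embeddings = context_len * d_model
    return blocks + token_embeddings + position_embeddings

def training_memory_gb(n_params, bytes_per_param=16):
    """Rough memory for weights, gradients and Adam state in FP32.

    16 bytes = 4 (weights) + 4 (gradients) + 8 (two Adam moments).
    Activations are NOT included and often dominate in practice.
    """
    return n_params * bytes_per_param / 1024 ** 3

# Sizes matching the published GPT-2 "small" configuration.
params = gpt_param_count(n_layers=12, d_model=768, vocab_size=50257, context_len=1024)
print(f"{params / 1e6:.1f}M parameters")
print(f"{training_memory_gb(params):.2f} GB before activations")
```

Doubling `d_model` roughly quadruples the per-block cost, while doubling `n_layers` only doubles it, which is one reason width is usually the more expensive dimension to scale.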

Transformers Breakdown

The Transformer architecture is fundamental to GPT models. We saw earlier how the self-attention mechanism helps the model focus on context and the relationship between distant words, unlike traditional recurrent networks.

Attention heads are important in this process because they look at the incoming data from diverse perspectives, helping the model to capture richer patterns.
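The following sketch shows a single attention head in plain Python so the arithmetic is visible. It is a simplification: the input vectors are made-up numbers used directly as queries, keys, and values, whereas a real model first applies learned projection matrices and runs many heads in parallel on a GPU.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def causal_self_attention(queries, keys, values):
    """Scaled dot-product attention where position i only sees positions <= i."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for i, q in enumerate(queries):
        # Score the query against every key up to and including position i.
        scores = [
            sum(qx * kx for qx, kx in zip(q, keys[j])) / math.sqrt(d_k)
            for j in range(i + 1)
        ]
        weights = softmax(scores)
        # Weighted sum of the visible value vectors.
        out = [
            sum(w * values[j][dim] for j, w in enumerate(weights))
            for dim in range(len(values[0]))
        ]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Toy 2-dimensional vectors for a three-token sequence (made-up numbers).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = causal_self_attention(x, x, x)
for i, w in enumerate(weights):
    print(i, [round(p, 3) for p in w])
```

The causal restriction (each position attends only to itself and earlier positions) is what makes GPT a left-to-right generator. Multi-head attention repeats this computation with different projections and concatenates the results.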

Hyperparameter Tuning

The next step after completing the architecture is to tune the hyperparameters. This includes adjusting parameters such as the learning rate, batch size, and optimisation approaches.

In addition to grid search and random search, Optuna can assist you in locating the optimal model hyperparameters. Hyperparameter adjustment is critical since even minor changes can have a big impact on the model’s performance.
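Random search is the simplest of these strategies to sketch. The example below is illustrative only: `toy_validation_loss` is a hypothetical stand-in for a full training-and-evaluation run, and the search ranges are assumptions rather than recommended values. A library such as Optuna follows the same objective-function pattern with smarter sampling.

```python
import math
import random

def toy_validation_loss(learning_rate, batch_size):
    """Stand-in for a real training run; lowest near lr=3e-4, batch_size=32."""
    lr_term = (math.log10(learning_rate) - math.log10(3e-4)) ** 2
    bs_term = 0.1 * (math.log2(batch_size) - 5) ** 2
    return lr_term + bs_term

def random_search(objective, n_trials, seed=0):
    """Sample hyperparameters at random and keep the best-scoring set."""
    rng = random.Random(seed)
    best_params, best_loss = None, float("inf")
    for _ in range(n_trials):
        params = {
            "learning_rate": 10 ** rng.uniform(-5, -2),      # log-uniform sample
            "batch_size": rng.choice([8, 16, 32, 64, 128]),
        }
        loss = objective(**params)
        if loss < best_loss:
            best_params, best_loss = params, loss
    return best_params, best_loss

best_params, best_loss = random_search(toy_validation_loss, n_trials=50)
print(best_params, round(best_loss, 4))
```

Sampling the learning rate on a log scale matters because useful values span several orders of magnitude.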

Memory-Efficient Architectures

Memory efficiency is crucial for larger GPT models. Large-scale models can be managed better using model parallelism (dividing the model across multiple devices) or gradient checkpointing (also known as activation checkpointing, which saves memory during backpropagation by recomputing activations instead of storing them all). These tactics help you avoid running out of memory while still achieving great model performance.

Evaluating Model Performance

The next step after building the model is to evaluate its performance using appropriate metrics. These metrics help determine whether the model is achieving its goals and how it can be improved.

Key Metrics

Perplexity and accuracy are crucial evaluation metrics for generic NLP tasks. Perplexity indicates how effectively the model guesses the next word in a sequence, while accuracy measures how often the model returns the correct output.

For specific tasks like text generation or translation, BLEU (for machine translation) or ROUGE (for summarisation) scores are frequently employed to determine how closely the created text resembles a reference.

For classification tasks, precision, recall, and F1-score are better indicators of model performance. Make sure you select the appropriate metrics for your model’s intended use.
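Two of these metrics are simple enough to compute by hand. The sketch below assumes you already have the probabilities the model assigned to the correct tokens (for perplexity) and lists of true and predicted labels (for precision, recall, and F1). The sample numbers are made up.

```python
import math

def perplexity(token_probs):
    """exp of the average negative log-probability assigned to the true tokens."""
    nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(nll)

def precision_recall_f1(y_true, y_pred, positive=1):
    """Binary precision, recall and F1 for the chosen positive label."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

# A model that spreads its probability evenly over 4 options at every step.
print(perplexity([0.25, 0.25, 0.25, 0.25]))
print(precision_recall_f1([1, 0, 1, 1, 0], [1, 1, 1, 0, 0]))
```

A perplexity of about 4 means the model is, on average, as uncertain as if it were choosing uniformly among four tokens. Lower is better.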

Cross-validation

K-fold cross-validation divides your dataset into k folds, then trains the model k times, each time holding out a different fold for validation and training on the rest. This method helps detect overfitting and gives a more reliable estimate of model performance.
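A minimal sketch of the splitting logic is shown below. The helper name `k_fold_indices` is hypothetical, and the indices are left unshuffled for readability. In practice you would usually shuffle first, and for large language models a single held-out validation set is often used instead because repeated training is so expensive.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, validation_indices) for each of k folds."""
    indices = list(range(n_samples))
    # Spread the remainder so fold sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, val
        start += size

for fold, (train, val) in enumerate(k_fold_indices(10, 3)):
    print(fold, train, val)
```

Every sample appears in exactly one validation fold, so each data point is used for evaluation once and for training k - 1 times.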

Error Analysis

Analyse errors after model evaluation. The findings can point to improvements in your dataset or model, or highlight specific areas of concern. If the model produces redundant information or misinterprets context, this feedback can help refine tokenisation or improve the quality of the training data.

Tips and Trade-offs

Several trade-offs must be considered when building and evaluating your GPT model. These factors are critical in determining the overall model design.

Model Size vs Computational Cost

Larger versions like GPT-3 are powerful but computationally expensive. Model size drastically increases training time, memory, and infrastructure. Despite having less capacity, smaller models like GPT-2 are cheaper and faster to train. For budget-constrained projects, model size must be balanced against computational resources.

Choosing Tokenization Strategies

The tokenisation strategy can greatly affect model performance and efficiency. BPE subword tokenisation is more efficient and handles rare or unseen terms better, but it increases complexity. Try multiple tokenisation approaches to find the best fit for your dataset and goals.

Handling Overfitting and Underfitting

Overfitting and underfitting are common challenges when training ML models. Regularisation techniques like dropout layers reduce overfitting by reducing feature dependence. Make sure your model is complex enough to catch data patterns to avoid underfitting.

Fine-Tuning GPT Models

Fine-tuning allows you to adapt a pre-trained GPT model to your specific task, improving its performance for targeted applications.

Transfer Learning

Transfer learning adapts GPT-2 or GPT-3 models to a task-specific dataset. This allows you to apply knowledge learned from large-scale training without having to start from the beginning. Fine-tuning can be done on tasks such as sentiment analysis, text summarisation, or domain-specific language modelling.

Task-Specific Adjustments

Adjust the architecture and train the model on a domain-specific dataset to fine-tune it for specific applications (like question-answering or chatbot applications). For example, if you’re creating a medical chatbot, you can fine-tune GPT-3 using a curated dataset of medical dialogues so that the model is more likely to provide contextually relevant responses.

Transfer Learning in GPT Models

Transfer learning is an effective strategy to reduce training time and resources used while enhancing model performance.

Fine-Tuning Pre-Trained Models

Fine-tuning is the process of tailoring a pre-trained model (such as GPT-2 or GPT-3) to a specific task or domain through training on a smaller, task-specific dataset.

Here, instead of starting from scratch, you’re leveraging the model’s extensive training on large datasets. Fine-tuning allows the model to easily adapt to new domains, such as medical text, legal language, and customer service interactions, without requiring much processing power.

Strategies for Effective Fine-Tuning

To fine-tune successfully, consider these best practices:

- Small, Domain-Specific Datasets: Fine-tuning is most effective when the additional training data is both specialised and directly relevant to the specific task. For example, if you’re developing a chatbot for a legal service, focus on training the model with language from legal papers, court opinions, and legal consultations.

- Gradual Learning: Do not “overtrain” a pre-trained model when fine-tuning. Adjust hyperparameters and learning rates gradually to maintain model generalisation. A learning rate that is too high can destabilise training and overwrite what the model learned during pretraining, whereas one that is too low may cause the model to learn too slowly.

- Layer-Specific Tuning: In many cases, you don’t need to fine-tune the whole model. You may opt to adjust only the later layers of the neural network while keeping the lower layers frozen. This can save computational resources and accelerate the process, especially if the basic model already recognises general linguistic patterns.
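One common way to apply the "gradual learning" advice is a learning-rate schedule with a short warmup followed by a smooth decay. The sketch below is an assumption on our part, not something the practices above prescribe: the peak rate of 5e-5, the 100 warmup steps, and the cosine shape are hypothetical defaults chosen for illustration.

```python
import math

def lr_at_step(step, total_steps, peak_lr=5e-5, warmup_steps=100, min_lr=0.0):
    """Linear warmup to peak_lr, then cosine decay to min_lr."""
    if step < warmup_steps:
        # Ramp up gently so early updates do not disturb pretrained weights.
        return peak_lr * (step + 1) / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return min_lr + 0.5 * (peak_lr - min_lr) * (1 + math.cos(math.pi * progress))

for step in (0, 50, 100, 550, 1000):
    print(step, f"{lr_at_step(step, total_steps=1000):.2e}")
```

The function returns the rate for a given step, so it can be plugged into whichever training loop or framework scheduler you use.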

Fine-Tuning for Specialized Tasks

Transfer learning allows you to easily tailor the GPT model for a variety of specialised tasks, such as:

- Text Summarisation: Fine-tune the model on a dataset of articles paired with summaries to improve its ability to generate concise, coherent summaries of long texts.

- Sentiment Analysis: Train the model on a dataset of labelled text indicating sentiment (positive, negative, neutral) to enhance its ability to classify the tone of new text inputs.

- Domain-Specific Applications: Training the model using a domain-specific corpus can improve performance for highly specialised jobs like technical support or legal guidance.

Stage 3: Post-Development - Deployment & Training

The next critical step after GPT model development and testing is deployment and training on real-world data. This ensures that your model is practical, performs well after production, and operates efficiently.

In this section, we will walk you through the steps involved in deploying your model, fine-tuning it with your data, and optimising its performance for large-scale production tasks.

How to Train a GPT Model

Step-by-Step Guide

Data selection and model initialisation are the first two steps in training a GPT model. First, choose a high-quality, diverse dataset for your task. After preprocessing and tokenising data, initialise the model. This usually involves customising GPT models’ transformer architecture (layers, attention heads) and training environment. Start small and increase batch size as the model learns.

Hardware Utilization

Using GPUs or TPUs efficiently speeds up training. A best practice is to use multi-GPU systems to increase processing power. Distributing the load across GPUs allows larger batches to be processed faster, reducing training time. Tools such as PyTorch’s DistributedDataParallel, TensorFlow’s tf.distribute strategies, and NVIDIA’s NCCL communication library make it easier to manage multiple GPUs efficiently.

Using Pre-trained Models for Quick Start

Models don’t always need to be built from scratch. Hugging Face’s model hub offers pre-trained models that you can quickly fine-tune to meet your needs. By starting with a model already trained on large datasets, you can leverage its foundational knowledge and accelerate the fine-tuning process for task-specific requirements.

Techniques for Optimising Training Time

Model Parallelism

When working with large models, memory constraints can become a bottleneck. Model parallelism involves splitting the model across multiple GPUs. Each GPU handles a portion of the model’s layers, which distributes memory usage and makes it possible to train models with billions of parameters that would not fit on a single device.

Data Parallelism

In contrast to model parallelism, data parallelism distributes the data itself across multiple GPUs. Each GPU holds a copy of the model and processes a different batch of data, and the resulting gradients are then aggregated to update the model weights. This approach is ideal when you have large datasets and sufficient computational resources. It allows you to scale up training without modifying the model architecture.
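The aggregation step can be simulated on a single machine. In this sketch, a one-parameter model `y = w * x` stands in for the network and list slices stand in for GPUs. The names and data are made up, and the batch size is assumed to divide evenly among workers.

```python
def mse_gradient(w, batch):
    """Gradient of mean squared error for the model y = w * x."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)

def data_parallel_gradient(w, batch, n_workers):
    """Split the batch into equal shards, compute per-shard gradients, then average."""
    shard_size = len(batch) // n_workers
    shards = [batch[i * shard_size:(i + 1) * shard_size] for i in range(n_workers)]
    local_grads = [mse_gradient(w, shard) for shard in shards]  # one per simulated GPU
    return sum(local_grads) / n_workers                          # the "all-reduce" step

batch = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]  # points on y = 2x
w = 0.5
print(mse_gradient(w, batch))
print(data_parallel_gradient(w, batch, n_workers=2))
```

With equal-sized shards, averaging the per-worker gradients gives the same result as computing the gradient on the full batch, which is why data parallelism leaves the training maths unchanged.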

Gradient Accumulation

GPUs can struggle to handle huge batch sizes while training large models, as they need significant memory. Gradient accumulation is a technique that calculates gradients over several smaller batches and applies a single weight update after a few iterations. This lets you simulate larger batches without overburdening your hardware. It’s especially helpful when using smaller GPUs or when training on limited hardware resources.
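Here is a minimal sketch of the idea using a one-parameter model `y = w * x` in plain Python. The function names, data, and learning rate are hypothetical. In a deep learning framework the same pattern amounts to calling the backward pass on each micro-batch and stepping the optimiser only once every few iterations.

```python
def mse_gradient(w, batch):
    """Gradient of mean squared error for the model y = w * x."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)

def sgd_step_with_accumulation(w, batch, micro_batch_size, lr):
    """One optimiser step whose gradient is accumulated over several micro-batches."""
    accumulated = 0.0
    n_micro = 0
    for start in range(0, len(batch), micro_batch_size):
        micro = batch[start:start + micro_batch_size]
        accumulated += mse_gradient(w, micro)  # "backward pass" on a small batch
        n_micro += 1
    grad = accumulated / n_micro               # average so the scale matches one big batch
    return w - lr * grad                       # single weight update

batch = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]  # points on y = 2x
w_accumulated = sgd_step_with_accumulation(0.5, batch, micro_batch_size=2, lr=0.01)
w_full_batch = 0.5 - 0.01 * mse_gradient(0.5, batch)
print(w_accumulated, w_full_batch)
```

Only one micro-batch needs to be in memory at a time, yet the resulting update matches what a single large batch would have produced (assuming equal-sized micro-batches).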

Mixed Precision Training

Mixed precision training involves utilising lower precision numbers (e.g., FP16 rather than FP32) during training. This decreases memory usage and speeds up computations, allowing you to process more data in less time while largely maintaining accuracy. Many frameworks, including TensorFlow and PyTorch, support mixed precision training, which makes it an excellent way to optimise training speed.
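The main risk with FP16 is that very small gradients round to zero. The sketch below uses Python's `struct` module to emulate half precision and shows loss scaling, a companion technique that is not described above but is commonly paired with mixed precision. The gradient value and the scale factor of 1024 are made-up numbers for illustration.

```python
import struct

def to_fp16(x):
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("e", struct.pack("e", x))[0]

tiny_gradient = 1e-8
loss_scale = 1024.0

# Stored directly in FP16, the gradient underflows.
print(to_fp16(tiny_gradient))

# Scaling before the cast keeps it representable; unscale in full precision afterwards.
scaled = to_fp16(tiny_gradient * loss_scale)
recovered = scaled / loss_scale
print(recovered)
```

Framework implementations typically handle this scaling automatically and adjust the factor during training, so you rarely need to manage it by hand.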

Training GPT Models on Your Own Data

Fine-tuning with Custom Datasets

Once the base model is trained, fine-tuning it on your own data is essential for domain-specific applications.

Whether you’re building a medical assistant or a customer support chatbot, fine-tuning the model with relevant data allows it to adapt to specialised vocabulary and contextual nuances.

You’ll need to feed the model with text data that closely resembles the type of output you want it to generate and gradually adjust it to align with your objectives.

Hyperparameter Adjustment

Optimal performance requires hyperparameter adjustments as you fine-tune the model. During fine-tuning, keep an eye on metrics like loss, accuracy, and BLEU score to guide adjustments.

To improve model efficiency and generalisation, you can adjust hyperparameters such as learning rate, batch size, and optimiser settings (e.g., for the Adam optimiser). The learning rate needs particular care: a rate that is too high makes training unstable, while a rate that is too low makes learning slow.
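The effect of the learning rate is easy to reproduce on a toy problem. The sketch below minimises f(x) = x² with plain gradient descent. The three learning rates are arbitrary values picked to show each regime and are not recommendations for GPT fine-tuning.

```python
def gradient_descent(lr, start=1.0, steps=50):
    """Minimise f(x) = x**2 with plain gradient descent and return the final |x|."""
    x = start
    for _ in range(steps):
        x -= lr * 2 * x  # the gradient of x**2 is 2x
    return abs(x)

too_high = gradient_descent(lr=1.1)    # overshoots further on every step and diverges
balanced = gradient_descent(lr=0.1)    # converges quickly towards the minimum at 0
too_low = gradient_descent(lr=0.001)   # moves in the right direction, but very slowly
```

After 50 steps, the high rate has moved far away from the minimum, the low rate has barely left the starting point, and only the balanced rate ends up close to zero.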

Scaling GPT Models for Production

Containerization

To deploy GPT models in production environments, containerization is essential. Docker is a popular tool for packaging a model and its dependencies into a container, while Kubernetes orchestrates those containers. Together, they let models run in isolated environments that are easy to scale and manage.

With Kubernetes, you can automatically scale containers up or down based on traffic demands, making it an efficient option for large-scale deployment.
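To make the scaling behaviour concrete, the sketch below applies the replica formula documented for the Kubernetes Horizontal Pod Autoscaler: the desired replica count is the current count multiplied by the ratio of the observed metric to its target, rounded up. The function name, the replica limits, and the metric values are assumptions for illustration. The real autoscaler also applies a tolerance band and stabilisation windows that are left out here.

```python
import math

def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """Simplified Horizontal Pod Autoscaler calculation.

    desired = ceil(current_replicas * current_metric / target_metric),
    clamped to the configured minimum and maximum.
    """
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))

# Assumed example: the target is 60% average CPU utilisation per pod.
scale_up = desired_replicas(current_replicas=2, current_metric=90, target_metric=60)
scale_down = desired_replicas(current_replicas=4, current_metric=15, target_metric=60)
capped = desired_replicas(current_replicas=5, current_metric=300, target_metric=60)
```

When load rises above the target, the replica count grows. When it falls, replicas are removed. The maximum prevents a traffic spike from scaling the deployment without bound.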

Distributed Computing

For large model deployment, cloud computing platforms such as AWS SageMaker, Google Cloud AI, and Microsoft Azure are great options. These services provide scalable infrastructure for GPT model computations.

Distributed computing offers parallel training and inference, which is advantageous for huge datasets or high query volumes in production.

Model Versioning and Management

When working on large projects, model versioning (version control for machine learning models and the data used to train them) becomes essential. Using tools like MLflow or DVC (Data Version Control), you can track different versions of your model, the parameters used, and the training data.

Edge Deployment

Edge deployment is well suited to latency-sensitive applications such as mobile apps and IoT devices. Deploying models directly to edge devices reduces the need for cloud data transfer, which improves response times.

However, edge devices have limited resources; thus, quantisation and model pruning are needed to reduce model size while maintaining accuracy.

Performance Optimization Techniques

Model Pruning

Model pruning involves eliminating unimportant weights or neurons from the trained model. Removing these extraneous elements reduces model size and speeds up inference, ideally with little loss in accuracy. It’s useful in resource-constrained environments where latency and memory are critical factors.
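One common way to decide which weights are "unimportant" is magnitude pruning, which removes the weights closest to zero. The sketch below is a plain-Python illustration under that assumption. The weight values and the 50% sparsity level are made up for the example.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the given fraction of weights with the smallest absolute value."""
    n_prune = int(len(weights) * sparsity)
    # Indices of the n_prune weights closest to zero.
    by_magnitude = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    prune_idx = set(by_magnitude[:n_prune])
    return [0.0 if i in prune_idx else w for i, w in enumerate(weights)]

weights = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02, 0.45, -0.1]
pruned = magnitude_prune(weights, sparsity=0.5)
remaining = sum(1 for w in pruned if w != 0.0)  # number of weights still active
```

The zeroed weights only save memory and time when the model is stored or executed in a sparse-aware format, and accuracy should be re-checked (and the model often briefly fine-tuned) after pruning.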

Quantization

Another technique to optimise performance is quantisation, which reduces the precision of the model weights (e.g., from FP32 to INT8). This can drastically decrease the model’s memory footprint, enabling faster inference and reducing storage requirements.
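The idea can be sketched with symmetric per-tensor quantisation, one of several possible schemes. In this illustrative version, every weight is mapped to an integer between -127 and 127 using a single scale factor. The weight values and function names are assumptions for the example.

```python
def quantize_int8(weights):
    """Symmetric quantisation: map floats to integers in [-127, 127]."""
    scale = max(abs(w) for w in weights) / 127.0  # assumes at least one non-zero weight
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]

weights = [0.52, -1.27, 0.003, 0.9, -0.41]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each weight now needs one byte instead of four, and the rounding error per weight is at most half of `scale`. Very small weights such as 0.003 collapse to zero, which is one reason accuracy should be validated after quantisation.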

Asynchronous Inference

For real-time applications, asynchronous inference improves responsiveness. Instead of making each request wait until the model has finished the previous one, inference tasks are offloaded and processed concurrently. This strategy is useful for chatbots and virtual assistants that need fast, on-demand responses.
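A minimal sketch with Python’s `asyncio` shows the pattern. The `fake_model` coroutine is a stand-in that simply sleeps to simulate latency. In a real service, it would be a call to a model server or worker process, since heavy computation inside the event loop itself would still block other requests.

```python
import asyncio
import time

async def fake_model(prompt):
    """Stand-in for a model call; the sleep simulates inference latency."""
    await asyncio.sleep(0.05)
    return f"response to: {prompt}"

async def serve_concurrently(prompts):
    """Keep all requests in flight at once instead of queuing them one by one."""
    return await asyncio.gather(*(fake_model(p) for p in prompts))

prompts = ["order status", "reset password", "refund policy"]
start = time.perf_counter()
responses = asyncio.run(serve_concurrently(prompts))
elapsed = time.perf_counter() - start  # close to one request's latency, not three
```

`asyncio.gather` returns the responses in the same order as the prompts, so each reply can be matched back to the user who sent the request.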

Host Setup and Environment Isolation

Optimise your hosting for GPT model deployment. Environment isolation (using Docker containers or virtual environments) cleanly manages dependencies and prevents service conflicts. A properly configured host environment can reduce deployment problems and boost overall system reliability.

Challenges in Building GPT Models

1. Data Collection

Building a high-performing GPT model requires access to vast amounts of high-quality, relevant data. This can be a major hurdle, as collecting such data can be costly and time-consuming, especially when it needs to be domain-specific.

Moreover, companies often struggle with gathering a diverse dataset that ensures the model doesn’t develop biases. Data collection can also present ethical concerns, particularly regarding privacy and consent.

How to Overcome:

Businesses can address this by leveraging publicly available datasets such as Common Crawl or OpenWebText. When building a custom dataset, diversity and ethical sourcing should be priorities. Synthetic data generation can help fill gaps in coverage, and crowdsourcing can be used for data labelling.

Businesses should also frequently check data for bias and skewed representation to avoid reinforcing negative assumptions.

2. Overfitting

Overfitting occurs when a model performs well on its training data but fails to generalise to new, unseen data. This is a common issue when the dataset is small or lacks diversity.

Overfitting leads to poor performance in real-world applications and can severely affect a model’s reliability and usefulness.

How to Overcome:

Businesses can use dropout layers, L2 regularisation, and early stopping (a type of regularisation) to reduce overfitting. Cross-validation is also valuable for evaluating model performance on unseen data, ensuring proper generalisation. Additionally, expanding and diversifying the training dataset plays a key role in reducing overfitting.
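Early stopping is the simplest of these to illustrate. The sketch below stops training once the validation loss has failed to improve for a set number of epochs, often called the patience. The loss values and the patience of 3 are invented for the example.

```python
def early_stopping_epoch(val_losses, patience):
    """Return the 0-based epoch at which training would stop, or None if it never does."""
    best = float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best:
            best = loss
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch
    return None

# Validation loss improves until epoch 3, then starts rising (a sign of overfitting).
val_losses = [2.1, 1.7, 1.5, 1.45, 1.48, 1.52, 1.6, 1.75]
stop_epoch = early_stopping_epoch(val_losses, patience=3)
```

In practice, the weights saved at the best epoch (epoch 3 in this example) are the ones you would keep, rather than the weights from the epoch where training stopped.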

3. Computational Resource Constraints

Training large GPT models requires high-performance GPUs or TPUs. This can hold back smaller companies with limited hardware budgets. The sheer scale of data and training time can also slow down the development process.

How to Overcome:

As discussed earlier, businesses can turn to cloud-based platforms like AWS, Google Cloud, or Azure, which offer scalable computing power on demand.

To reduce hardware costs, techniques such as model parallelism, mixed-precision training, and gradient accumulation can help distribute computational demands more efficiently.

4. Bias in Data

GPT models learn from the data they’re trained on, meaning any bias in the data, whether related to gender, race, or culture, can be learned and amplified by the model. This can lead to ethical concerns, particularly if the model is deployed in sensitive areas such as hiring, healthcare, or law enforcement.

How to Overcome:

Data audits, bias detection, and fairness-aware algorithms are required to ensure fairness and reduce bias.

Organisations can employ techniques such as adversarial training to discourage models from producing biased outputs. To ensure fairness, the development lifecycle should incorporate ethical testing and validation.

5. Model Size and Training Time

As GPT models grow in size, so does the time and cost involved in training them. Huge models, such as GPT-3, require immense resources, which can be a barrier for organisations without access to powerful infrastructure.

Larger models also tend to take longer to train, which slows down time to market for businesses looking to deploy quickly.

How to Overcome:

Early stopping can cut training time by ending runs once the model stops improving. Gradient checkpointing lowers memory use (at the cost of some extra computation), so larger models fit on the available hardware, and model pruning reduces the size of the final model. Distributing training across multiple GPUs and using cloud computing resources makes it possible to scale training without overburdening local infrastructure. Using smaller models or fine-tuning pre-trained models can also save time and resources.

Conclusion

Building a custom GPT model for your business is not just feasible; it’s within reach with the right knowledge, tools, and approach. The possibilities are wide-ranging, from automating repetitive tasks to developing cutting-edge AI-powered services for your clients. However, data gathering, model building, and training can be intimidating without the necessary expertise.

If you’re looking to build a custom GPT model specifically for your business, whether for internal use or as a commercial product, Wegile can assist you. Our team specialises in Generative AI development services, creating custom solutions tailored to your specific vision and objectives.

We understand the nuances of building models that meet your business goals and ensure they are both scalable and efficient. Reach out to us today, and let’s make your AI-driven ambitions a reality!

FAQs

1. How to build a GPT model from scratch?

Building a GPT model involves three main stages:

- Managing Prerequisites: Prepare tools (Python, PyTorch, TensorFlow), datasets, and computational resources (GPUs, TPUs).

- Building the Model: Design the architecture (transformers, attention heads) and train with your preprocessed data.

- Post-Development: Fine-tune the model, deploy it on cloud infrastructure, and optimise for performance.

2. Can we build our own GPT?

Yes, but it requires significant resources and expertise. Key considerations include data availability, computational power (GPUs or TPUs), and deep learning knowledge. It’s often more efficient to partner with a generative AI development company that can guide you through the complex process.

3. How to train a GPT model?

- Data Preprocessing: Collect and clean your data, then tokenise it.

- Model Initialisation: Select a transformer architecture and configure hyperparameters.

- Training: Use GPUs for efficient training and adjust hyperparameters as needed.

- Fine-tuning: Adapt the model to specific tasks and evaluate using metrics like perplexity and accuracy.

- Deployment: Deploy the trained model to your application, optimising for performance.

4. What are the applications of GPT?

- Customer Support: Automates chatbots for real-time, context-aware responses.

- Content Creation: Generates blog posts, articles, and social media content.

- Text Summarisation: Condenses long-form content into digestible summaries.

- Language Translation: Translates content with accuracy and nuance.

- Market Analysis: Analyses customer feedback and trends for decision-making.

- Creative Writing: Assists in generating creative content like stories and scripts.

- Personalised Recommendations: Delivers tailored recommendations in e-commerce and streaming services.
